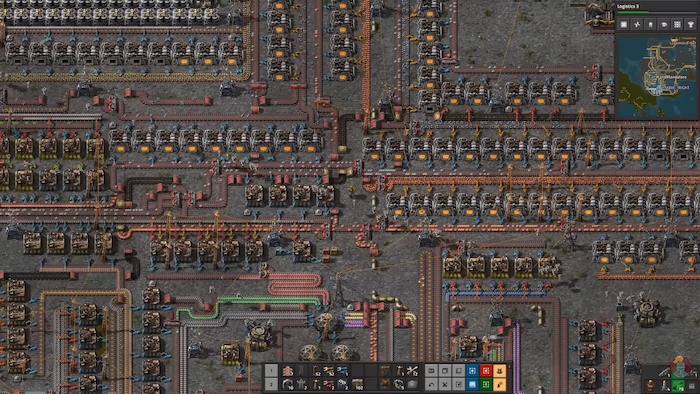

I used to play a lot of Factorio. It's a game about building a factory. You design swarms of tiny robots to build the parts you need to construct a rocket that lifts you off the planet. The whole game is about designing systems that run themselves.

OpenClaw is real-life Factorio. You can create your own swarm of tiny robots to automate every task. All the stuff we used to do on a computer - email, calendars, server management, passwords - can now be performed automatically by writing a Markdown file and dropping it in a skills directory. This is a breakthrough.

But OpenClaw skills don't exist in a closed, virtual world. Anything a human can do on a computer, OpenClaw can do too. This is a feature. We want our agents to actually do things. The problem is that, just like a human, there is no way to fully know what your agent will do before you let it run.

What "Normal" Looks Like

As part of my own exploration into OpenClaw, I surveyed a random sampling of recently published skills on ClawHub. Skill after skill exhibited behavior that would set off alarms in any other context. Installing background daemons. Creating system services. Writing cron jobs. One developer published four separate skills interfacing with 1Password within a few hours.

This is what normal looks like in the land of OpenClaw.

None of this is (necessarily) malicious. A skill that manages your server should install a system service. A skill that handles your passwords needs access to your password manager. This is exactly the problem. The behaviors we'd normally flag as malicious are the same behaviors that make skills useful. There is no signature that separates a helpful skill from a harmful one. They do the same things for different reasons.

The Defenses

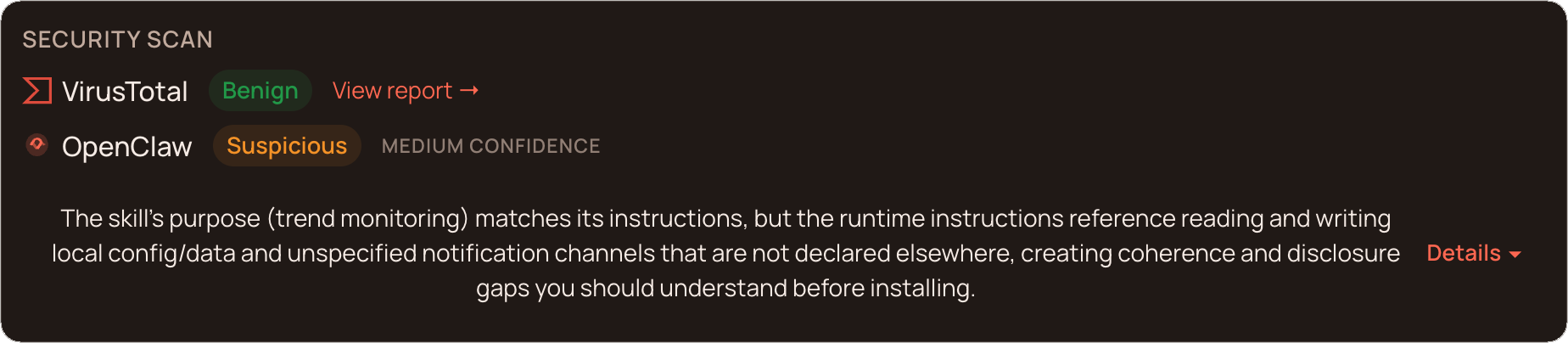

ClawHub runs skills through VirusTotal and an LLM-based security review that reads skill code and flags suspicious patterns. These are reasonable measures. VirusTotal catches known malware signatures. The LLM scan catches dangerous code patterns and what the OpenClaw team calls "incoherence" - i.e., the skill doing things that don't match what it says it's supposed to do.

These defenses face an impossible task. How do you distinguish between a skill that installs a daemon to monitor your server and one that installs a daemon to steal your data? The operations are identical. The permissions are identical. The code is often identical. Only the intent is different, and intent doesn't show up in a scan.

What's more, many skills call external APIs (that's why they're useful). A skill that calls an external API can receive new instructions at runtime. The code that passes every review today can behave differently tomorrow because the API it talks to sent a different response. No amount of pre-installation scanning accounts for what happens after installation.

Why You Can't Just Sandbox It

The obvious response is to sandbox it. Just run OpenClaw in a container! Restrict its access! Limit the blast radius!

Think about what these skills actually need to do. They manage your email. Update your calendar. Control your browser. Interact with your password manager. Install packages. Hit APIs. The entire value of an AI agent is its access to your system. Lock it in a box with no network and no system access and it can't do its job.

But that doesn't mean sandboxing is wrong. It means naive sandboxing is wrong. The goal isn't to cut the agent off from the world. It's to give it a real environment with clear, controlled points of ingress and egress. Let it install packages. Let it call APIs. But define exactly which domains it can reach, deny everything else, and log every connection attempt.

What We're Building Instead

Scanning is the wrong paradigm for agentic software. Traditional security scanning assumes the threat is in the artifact. The file. The binary. The package. With agent skills, the threat is in the behavior, at runtime, in context. The artifact can be completely clean.

The security boundary can't be the skill. It has to be the execution environment. Not "is this code safe?" but "what is this code doing right now, and does the operator know about it?"

Concretely, that looks like hardened environments where agents run on real machines with real network access, but with egress controls enforced at the infrastructure level. Allowlist the domains the agent needs. Deny everything else. Log every outbound connection — allowed or denied. Start in a permissive mode, watch what the agent actually does, then build a precise policy from observed behavior instead of guesswork.

Agents should be able to install daemons, manage credentials, and call external APIs. That's what makes them useful. But every one of those actions should be visible, logged, and subject to a policy the operator defined. And when something does go wrong — because eventually it will — rolling back to a known-good state should be trivial.

The Bottom Line

OpenClaw's own maintainer put it well: "If you can't understand how to run a command line, this is far too dangerous of a project for you to use safely."

I'd go further. Even if you understand how to run a command line, you can't fully audit what a skill does before running it. Not because you're not smart enough, but because the information doesn't exist until runtime.

The answer isn't "don't use agents." The answer is to build infrastructure that makes agents safe to run. Not by restricting what they can do, but by watching what they actually do.

That's what we're building at Iron.sh.

I'm Matthew, founder of Iron.sh. If your team is running agents and thinking about security, reach out.